作者:沈光宇

爱可生南区 DBA 团队成员,主要负责 MySQL 故障处理和性能优化。对技术执着,为客户负责。

本文来源:原创投稿

*爱可生开源社区出品,原创内容未经授权不得随意使用,转载请联系小编并注明来源。

一、背景

某客户MySQL实例中数据较大(上T的数据量),需要将MySQL中数据全量/增量快速迁移至DBLE。TiDB DM支持多线程数据导出导入及增量同步,因此选择了TiDB DM为作数据迁移工具。本文以此案例为背景,介绍使用TiDB DM将数据从MySQL迁移至DBLE的方法及遇到的一些问题。

二、数据迁移示意图及服务器环境

(1)数据迁移示意图如下:

(2)服务器环境如下:

| 服务器IP | 角色 | 端口 | 版本 | 备注 |

|---|---|---|---|---|

| 10.186.65.83 | dm master | 8261/8291 | V2.0.7 | tiup安装节点 |

| 10.186.65.118 | dm worker | 8262 | V2.0.7 | |

| 10.186.65.14 | SRC MySQL | 4490 | MySQL 8.0.18 | |

| 10.186.65.4 | DBLE | 8066 | DBLE 3.21.10.6 | |

| 10.186.65.61 | DBLE datanode | 4408 | MySQL 8.0.25 | |

| 10.186.65.65 | DBLE datanode | 4408 | MySQL 8.0.25 |

三、安装 TiDB DM

(1)准备环境及安装 tiup

#分别在dm master和worker节点主机创建tidb用户,密码为dmadmin,并配置sudo shell> useradd tidb shell> echo "dmadmin" | passwd tidb --stdin shell> echo "tidb ALL=(ALL) NOPASSWD: ALL" > /etc/sudoers.d/tidb #切换至tidb用户,生成密钥,并做ssh信任,dm集群内机器都需要做ssh信任 shell> su - tidb shell> ssh-keygen -t rsa shell> ssh-copy-id -i ~/.ssh/id_rsa.pub tidb@10.186.65.118 shell> ssh-copy-id -i ~/.ssh/id_rsa.pub tidb@10.186.65.83 #安装tiup,在tidb用户下 shell> curl --proto '=https' --tlsv1.2 -sSf https://tiup-mirrors.pingcap.com/install.sh | sh shell> source .bash_profile shell> which tiup ~/.tiup/bin/tiup #安装dmctl shell> tiup install dm dmctl:v2.0.7 component dm version v1.10.2 is already installed download https://tiup-mirrors.pingcap.com/dmctl-v2.0.7-linux-amd64.tar.gz 26.92 MiB / 26.92 MiB 100.00% 10.01 MiB/s

(2)安装dm-cluster

#生成配置模版 shell> tiup dm template > dm_topology.yaml #修改后配置文件如下: shell> cat dm_topology.yaml |grep -v ^[#-] |grep -v ^$ global: user: "tidb" ssh_port: 22 deploy_dir: "/home/tidb/dm/deploy" data_dir: "/home/tidb/dm/data" master_servers: - host: 10.186.65.83 worker_servers: - host: 10.186.65.118 monitoring_servers: - host: 10.186.65.83 grafana_servers: - host: 10.186.65.83 alertmanager_servers: - host: 10.186.65.83 #安装、并启动DM集群 shell> tiup dm deploy dm-test v2.0.7 ./dm_topology.yaml --user tidb -i /home/tidb/.ssh/id_rsa shell> tiup dm start dm-test #查看DM集群 [tidb@10_186_65_83 ~]$ tiup dm display dm-test tiup is checking updates for component dm ... Starting component `dm`: /home/tidb/.tiup/components/dm/v1.10.2/tiup-dm display dm-test Cluster type: dm Cluster name: dm-test Cluster version: v2.0.7 Deploy user: tidb SSH type: builtin Grafana URL: http://10.186.65.83:3000 ID Role Host Ports OS/Arch Status Data Dir Deploy Dir -- ---- ---- ----- ------- ------ -------- ---------- 10.186.65.83:9093 alertmanager 10.186.65.83 9093/9094 linux/x86_64 Up /home/tidb/dm/data/alertmanager-9093 /home/tidb/dm/deploy/alertmanager-9093 10.186.65.83:8261 dm-master 10.186.65.83 8261/8291 linux/x86_64 Healthy|L /home/tidb/dm/data/dm-master-8261 /home/tidb/dm/deploy/dm-master-8261 10.186.65.118:8262 dm-worker 10.186.65.118 8262 linux/x86_64 Free /home/tidb/dm/data/dm-worker-8262 /home/tidb/dm/deploy/dm-worker-8262 10.186.65.83:3000 grafana 10.186.65.83 3000 linux/x86_64 Up - /home/tidb/dm/deploy/grafana-3000 10.186.65.83:9090 prometheus 10.186.65.83 9090 linux/x86_64 Up /home/tidb/dm/data/prometheus-9090 /home/tidb/dm/deploy/prometheus-9090 Total nodes: 5

四、DBLE端配置

(1)db.xml

<?xml version="1.0"?>

<dble:db xmlns:dble="http://dble.cloud/">

<dbGroup name="dbGroup1" rwSplitMode="0" delayThreshold="-1">

<heartbeat>select 1</heartbeat>

<dbInstance name="host_1" url="10.186.65.61:4408" user="sgy" password="QfnHoIeIYL7ZT+EdJMYNuiLw6glbx2hEyrqxK+uxFPU8vja8vZHCLEKvKBOt1vOJdXCCgW7wNidJaTYWORDaUg==" maxCon="2000" minCon="50" primary="true" usingDecrypt="true"></dbInstance>

</dbGroup>

<dbGroup name="dbGroup2" rwSplitMode="0" delayThreshold="-1">

<heartbeat>select 1</heartbeat>

<dbInstance name="host_2" url="10.186.65.65:4408" user="sgy" password="d+rG/82+4h21ARS8D6Gu5MIFQ2UBC0h+ZS0EI7bvWAtTJUBsKuFY8+AVZtW1pZk+qtISoI2WpVt29Z9eqMRP5A==" maxCon="2000" minCon="50" primary="true" usingDecrypt="true"></dbInstance>

</dbGroup>

</dble:db>

#注:用户密码为加密后的字符串

(2)sharding.xml

<?xml version="1.0"?>

<dble:sharding xmlns:dble="http://dble.cloud/" >

<schema name="dm_meta" sqlMaxLimit="-1">

<singleTable name="mysql_sync_to_dble_loader_checkpoint" shardingNode="dm_meta" />

<singleTable name="mysql_sync_to_dble_syncer_checkpoint" shardingNode="dm_meta" />

</schema>

<schema name="sbtest" sqlMaxLimit="-1">

<shardingTable name="sbtest1" shardingNode="dn1,dn2,dn3,dn4" sqlMaxLimit="-1" shardingColumn="id" function="pro_func_jumpHash"></shardingTable>

<shardingTable name="sbtest2" shardingNode="dn1,dn2,dn3,dn4" sqlMaxLimit="-1" shardingColumn="id" function="pro_func_jumpHash"></shardingTable>

<shardingTable name="t1" shardingNode="dn1,dn2,dn3,dn4" sqlMaxLimit="-1" shardingColumn="c1" function="pro_func_jumpHash"></shardingTable>

<shardingTable name="t2" shardingNode="dn1,dn2,dn3,dn4" sqlMaxLimit="-1" shardingColumn="c1" function="pro_func_jumpHash"></shardingTable>

</schema>

<shardingNode name="dn1" dbGroup="dbGroup1" database="dh_dn1"></shardingNode>

<shardingNode name="dn2" dbGroup="dbGroup1" database="dh_dn2"></shardingNode>

<shardingNode name="dn3" dbGroup="dbGroup2" database="dh_dn3"></shardingNode>

<shardingNode name="dn4" dbGroup="dbGroup2" database="dh_dn4"></shardingNode>

<shardingNode name="dm_meta" dbGroup="dbGroup1" database="dm_meta"/>

<function name="pro_func_jumpHash" class="jumpStringHash">

<property name="partitionCount">4</property>

<property name="hashSlice">0:0</property>

</function>

</dble:sharding>

注:需要提前配置好DM同步时保存数据的schema及两张表,表名分别是

dm同步任务名 + _loader_checkpoint 即表mysql_sync_to_dble_loader_checkpoint

dm同步任务名 + _syncer_checkpoint 即表mysql_sync_to_dble_syncer_checkpoint

(3)user.xml

<?xml version="1.0"?>

<dble:user xmlns:dble="http://dble.cloud/">

<managerUser name="root" password="RYQdYYnzbcZlDuhV4LhJxFTM8kbU3d0y183LU+FgRWzNscvvvFPcdmeEKMdswMyzIaA+kObcozliHEYAlT0AjA==" usingDecrypt="true"></managerUser>

<shardingUser name="sz" schemas="sbtest,dm_meta" password="jyaXmGFU+mdTGbUZIVbdEcVwItLPI+Yjxdq4wkOzhAYRB29WGZJd0/PkTJh3ky/v4E2yYoqgUzJXPzPRPiQF0Q==" usingDecrypt="true" readOnly="false" maxCon="1000"></shardingUser>

</dble:user>

#注:用户密码为加密后的字符串

(4)登陆 DBLE 管理端执行创建物理数据库命令

shell> /data/mysql/base/5.7.36/bin/mysql -uroot -padmin -h 10.186.65.4 -P 9066 dble> create database @@shardingNode = 'dn$1-4'; Query OK, 1 row affected (0.06 sec) dble> create database @@shardingNode = 'dm_meta'; Query OK, 1 row affected (0.01 sec)

五、在源端 MySQL 压测创建测试数据

#执行prepare,初始化100W行数据 /usr/share/sysbench/oltp_insert.lua --mysql-db=sbtest --mysql-host=10.186.65.14 --mysql-port=4490 --mysql-user=sgy --mysql-password=admin --tables=2 --table-size=1000000 --threads=16 --time=60 --report-interval=1 --max-requests=0 --percentile=95 --mysql-ignore-errors=1062 prepare sysbench 1.0.17 (using system LuaJIT 2.0.4) Initializing worker threads... Creating table 'sbtest1'... Creating table 'sbtest2'... Inserting 1000000 records into 'sbtest2' Inserting 1000000 records into 'sbtest1' Creating a secondary index on 'sbtest2'... Creating a secondary index on 'sbtest1'... #利用sysbench不断写入数据 shell> /usr/share/sysbench/oltp_read_write.lua --mysql-db=sbtest --mysql-host=10.186.65.14 --mysql-port=4490 --mysql-user=sgy --mysql-password=admin --tables=2 --table-size=2000000 --threads=16 --time=6000 --report-interval=1 --max-requests=0 --percentile=95 --mysql-ignore-errors=1062 run sysbench 1.0.17 (using system LuaJIT 2.0.4) Running the test with following options: Number of threads: 16 Report intermediate results every 1 second(s) Initializing random number generator from current time Initializing worker threads... Threads started! [ 1s ] thds: 16 tps: 585.44 qps: 11916.49 (r/w/o: 8360.33/1282.63/2273.53) lat (ms,95%): 55.82 err/s: 0.00 reconn/s: 0.00 [ 2s ] thds: 16 tps: 744.18 qps: 14819.02 (r/w/o: 10379.16/1609.36/2830.50) lat (ms,95%): 41.85 err/s: 0.00 reconn/s: 0.00 [ 3s ] thds: 16 tps: 605.11 qps: 12144.20 (r/w/o: 8500.54/1321.24/2322.42) lat (ms,95%): 47.47 err/s: 0.00 reconn/s: 0.00 [ 4s ] thds: 16 tps: 773.91 qps: 15558.16 (r/w/o: 10881.71/1737.79/2938.65) lat (ms,95%): 41.10 err/s: 0.00 reconn/s: 0.00

六、启动数据同步任务

(1)配置数据源

#使用dmctl工具生成密码加密字符串,每次执行都会产生不同的加密字符串

shell> tiup dmctl encrypt 'admin'

dmXgktpuF18RP1mDN/B2UkA6pDN9PQ==

#mysql数据库配置文件如下

shell> cat mysql_source_14_4490.yaml

source-id: "mysql_source_14_4490"

from:

host: "10.186.65.14"

port: 4490

user: "sgy"

password: "dmXgktpuF18RP1mDN/B2UkA6pDN9PQ=="

#创建数据源

shell> tiup dmctl --master-addr 10.186.65.83:8261 operate-source create ./mysql_source_14_4490.yaml

Starting component `dmctl`: /home/tidb/.tiup/components/dmctl/v2.0.7/dmctl/dmctl --master-addr 10.186.65.83:8261 operate-source create ./mysql_source_14_4490.yaml

{

"result": true,

"msg": "",

"sources": [

{

"result": true,

"msg": "",

"source": "mysql_source_14_4490",

"worker": "dm-10.186.65.118-8262"

}

]

}

#查看数据源

shell> tiup dmctl --master-addr 10.186.65.83:8261 operate-source show

{

"result": true,

"msg": "",

"sources": [

{

"result": true,

"msg": "",

"source": "mysql_source_14_4490",

"worker": "dm-10.186.65.118-8262"

}

]

}

(2)配置同步任务

#同步配置文件如下

shell> cat mysql_sync_to_dble.yaml

name: "mysql_sync_to_dble" #同步任务名

task-mode: "all" #全量 + Binlog 实时同步

clean-dump-file: true

timezone: "Asia/Shanghai"

ignore-checking-items: ["auto_increment_ID"]

target-database:

host: "10.186.65.4" #DBLE主机IP地址

port: 8066 #DBLE流量端口

user: "sz" #连接DBLE的用户

password: "KRfSNtdxe63tehpnCYoCz0ABdUGivg==" #连接DBLE用户密码,用tiup dmctl encrypt 'password'生成

mysql-instances:

-

source-id: "mysql_source_14_4490"

block-allow-list: "global"

mydumper-config-name: "global"

loader-config-name: "global"

syncer-config-name: "global"

block-allow-list:

global:

do-dbs: ["sbtest"] #只迁移sbtest库

mydumpers:

global:

extra-args: "-B sbtest" #只dump sbtest库

loaders:

global:

pool-size: 8

syncers:

global:

worker-count: 8

#更详细配置说明参考官方文档:

https://docs.pingcap.com/zh/tidb-data-migration/v2.0/task-configuration-file-full#%E5%AE%8C%E6%95%B4%E9%85%8D%E7%BD%AE%E6%96%87%E4%BB%B6%E7%A4%BA%E4%BE%8B

#检查配置文件

shell> tiup dmctl:v2.0.7 --master-addr=10.186.65.83:8261 check-task ./mysql_sync_to_dble.yaml

Starting component `dmctl`: /home/tidb/.tiup/components/dmctl/v2.0.7/dmctl/dmctl --master-addr=10.186.65.83:8261 check-task ./mysql_sync_to_dble.yaml

{

"result": true,

"msg": "check pass!!!"

}

#启动同步任务

shell> tiup dmctl:v2.0.7 --master-addr=10.186.65.83:8261 start-task ./mysql_sync_to_dble.yaml

Starting component `dmctl`: /home/tidb/.tiup/components/dmctl/v2.0.7/dmctl/dmctl --master-addr=10.186.65.83:8261 start-task ./mysql_sync_to_dble.yaml

{

"result": true,

"msg": "",

"sources": [

{

"result": true,

"msg": "",

"source": "mysql_source_14_4490",

"worker": "dm-10.186.65.118-8262"

}

]

}

#在dm-worker节点查看导出的SQL文件,

shell> ls -lh /home/tidb/dm/deploy/dm-worker-8262/dumped_data.mysql_sync_to_dble

total 384M

-rw-r--r-- 1 tidb tidb 187 Aug 5 14:04 metadata

-rw-r--r-- 1 tidb tidb 48M Aug 5 14:04 sbtest.sbtest1.0000000000000.sql

-rw-r--r-- 1 tidb tidb 48M Aug 5 14:04 sbtest.sbtest1.0000000010000.sql

-rw-r--r-- 1 tidb tidb 48M Aug 5 14:04 sbtest.sbtest1.0000000020000.sql

-rw-r--r-- 1 tidb tidb 48M Aug 5 14:04 sbtest.sbtest1.0000000030000.sql

-rw-r--r-- 1 tidb tidb 369 Aug 5 14:04 sbtest.sbtest1-schema.sql

-rw-r--r-- 1 tidb tidb 48M Aug 5 14:04 sbtest.sbtest2.0000000000000.sql

-rw-r--r-- 1 tidb tidb 48M Aug 5 14:04 sbtest.sbtest2.0000000010000.sql

-rw-r--r-- 1 tidb tidb 48M Aug 5 14:04 sbtest.sbtest2.0000000020000.sql

-rw-r--r-- 1 tidb tidb 48M Aug 5 14:04 sbtest.sbtest2.0000000030000.sql

-rw-r--r-- 1 tidb tidb 369 Aug 5 14:04 sbtest.sbtest2-schema.sql

-rw-r--r-- 1 tidb tidb 152 Aug 5 14:04 sbtest-schema-create.sql

#创建库SQL文件

shell> head /home/tidb/dm/deploy/dm-worker-8262/dumped_data.mysql_sync_to_dble/sbtest-schema-create.sql

/*!40101 SET NAMES binary*/;

CREATE DATABASE `sbtest` /*!40100 DEFAULT CHARACTER SET utf8mb4 COLLATE utf8mb4_bin */ /*!80016 DEFAULT ENCRYPTION='N' */;

#创建表SQL文件

shell> head /home/tidb/dm/deploy/dm-worker-8262/dumped_data.mysql_sync_to_dble/sbtest.sbtest1-schema.sql

/*!40101 SET NAMES binary*/;

CREATE TABLE `sbtest1` (

`id` int(11) NOT NULL AUTO_INCREMENT,

`k` int(11) NOT NULL DEFAULT '0',

`c` char(120) COLLATE utf8mb4_bin NOT NULL DEFAULT '',

`pad` char(60) COLLATE utf8mb4_bin NOT NULL DEFAULT '',

PRIMARY KEY (`id`),

KEY `k_1` (`k`)

) ENGINE=InnoDB AUTO_INCREMENT=1000001 DEFAULT CHARSET=utf8mb4 COLLATE=utf8mb4_bin;

#dump生成批量插入数据SQL文件

shell> head /home/tidb/dm/deploy/dm-worker-8262/dumped_data.mysql_sync_to_dble/sbtest.sbtest1.0000000000000.sql

/*!40101 SET NAMES binary*/;

INSERT INTO `sbtest1` (`id`,`k`,`c`,`pad`) VALUES

(1,498670,'31451373586-15688153734-79729593694-96509299839-83724898275-86711833539-78981337422-35049690573-51724173961-87474696253','98996621624-36689827414-04092488557-09587706818-65008859162'),

(2,497778,'21472970079-70972780322-70018558993-71769650003-09270326047-32417012031-10768856803-14235120402-93989080412-18690312264','04776826683-45880822084-77922711547-29057964468-76514263618'),

(3,498956,'49376827441-24903985029-56844662308-79012577859-40518387141-60588419212-24399130405-42612257832-29494881732-71506024440','26843035807-96849339132-53943793991-69741192222-48634174017'),

(4,518727,'85762858421-36258200885-10758669419-44272723583-12529521893-95630803635-53907705724-07005352902-43001596772-53048338959','37979424284-37912826784-31868864947-42903702727-96097885121'),

(5,502480,'24805466175-85245528617-94635882649-46305216925-28637832581-03224489581-68883711727-95491561683-91969681472-12022277774','19288959552-55556468076-14192290426-55457672510-18043372364'),

(6,500774,'52892836230-54177743992-01821871718-48412537487-30066596248-87215430797-00375777469-64498831720-58542556455-90784765418','59487960480-08453890592-99628797439-16757639138-29377916560'),

(7,501466,'85820931248-14475640036-11980694501-86588543167-31029306229-09626867980-90685354565-02350460358-25863585366-53793794448','26081374730-86321700986-51212137094-30635959762-03880194434'),

(8,497838,'81578049255-33453976301-67096870761-27658738403-30546242249-53677469854-26594573136-34292002037-52736825353-99165193170','64289062455-51067794311-09919261228-11533354367-07401173317'),

(3)查看同步任务状态

shell> tiup dmctl:v2.0.7 --master-addr=10.186.65.83:8261 query-status ./mysql_sync_to_dble.yaml

Starting component `dmctl`: /home/tidb/.tiup/components/dmctl/v2.0.7/dmctl/dmctl --master-addr=10.186.65.83:8261 query-status ./mysql_sync_to_dble.yaml

{

"result": true,

"msg": "",

"sources": [

{

"result": true,

"msg": "",

"sourceStatus": {

"source": "mysql_source_14_4490",

"worker": "dm-10.186.65.118-8262",

"result": null,

"relayStatus": null

},

"subTaskStatus": [

{

"name": "mysql_sync_to_dble",

"stage": "Running",

"unit": "Sync",

"result": null,

"unresolvedDDLLockID": "",

"sync": {

"totalEvents": "425355",

"totalTps": "1203",

"recentTps": "2386",

"masterBinlog": "(mysql-bin.000027, 76114275)",

"masterBinlogGtid": "c7827165-bf89-11ec-92e6-02000aba410e:1-5091258",

"syncerBinlog": "(mysql-bin.000026, 229462770)",

"syncerBinlogGtid": "",

"blockingDDLs": [

],

"unresolvedGroups": [

],

"synced": false,

"binlogType": "remote",

"secondsBehindMaster": "38"

}

}

]

}

]

}

七、验证数据

#暂停先前执行的sysbench程序,确保dm同步与源端无延迟时,对表作count(*) #在源端MySQL,对表进行count(*) mysql> select count(*) from sbtest.sbtest1; +----------+ | count(*) | +----------+ | 1116471 | +----------+ 1 row in set (0.68 sec) mysql> select count(*) from sbtest.sbtest2; +----------+ | count(*) | +----------+ | 1117020 | +----------+ 1 row in set (0.54 sec) #通过dble对表进行count(*) dble> select count(*) from sbtest.sbtest1; +----------+ | count(*) | +----------+ | 1116471 | +----------+ 1 row in set (1.04 sec) dble> select count(*) from sbtest.sbtest2; +----------+ | count(*) | +----------+ | 1117020 | +----------+ 1 row in set (1.58 sec) #在源端数据进行更新操作 mysql> update sbtest.sbtest1 set c=uuid() where id=20; Query OK, 1 row affected (0.01 sec) Rows matched: 1 Changed: 1 Warnings: 0 mysql> select * from sbtest.sbtest1 where id=20; +----+--------+--------------------------------------+-------------------------------------------------------------+ | id | k | c | pad | +----+--------+--------------------------------------+-------------------------------------------------------------+ | 20 | 501448 | 24649f0f-14d1-11ed-b4f2-02000aba410e | 91052688950-96415657187-00012408429-12357288330-41295735957 | +----+--------+--------------------------------------+-------------------------------------------------------------+ 1 row in set (0.00 sec) #通过dble查询数据 dble> select * from sbtest.sbtest1 where id=20; +----+--------+--------------------------------------+-------------------------------------------------------------+ | id | k | c | pad | +----+--------+--------------------------------------+-------------------------------------------------------------+ | 20 | 501448 | 24649f0f-14d1-11ed-b4f2-02000aba410e | 91052688950-96415657187-00012408429-12357288330-41295735957 | +----+--------+--------------------------------------+-------------------------------------------------------------+ 1 row in set (0.01 sec)

八、注意事项

(1)TiDB DM版本要求:TiDB DM v2.0.7

(2)在进行数据量导入时需要关闭DBLE慢查询日志

#由于数据同步在全量导入阶段会产生大量慢查询,需要在执行同步任务之前先关闭DBLE的慢查询日志 shell> mysql --prompt='dble>' -uroot -p -h 10.186.65.4 -P 9066 #查看慢查询日志状态,1表示开启,0表示关闭 dble> show @@slow_query_log; +------------------+ | @@slow_query_log | +------------------+ | 1 | +------------------+ 1 row in set (0.00 sec) #全量数据导入前,关闭dble慢查询日志 dble> disable @@slow_query_log; Query OK, 1 row affected (0.01 sec) disable slow_query_log success #待全量数据导入完成后,开启dble慢查询日志 dble> enable @@slow_query_log; Query OK, 1 row affected (0.01 sec) enable slow_query_log success

(3)检查源端 MySQL 实例上是否有运行类似 pt-kill 的程序

#如果在源端MySQL实例上运行有pt-kill或类似的程序,DM同步任务在执行全量数据dump阶段,

导出线程可能会被程序kill掉,导致全备失败。dm-worker.log日志如下:

[ERROR] [subtask.go:311] ["unit process error"] [subtask=oms-sync-receiver] [unit=Dump] ["error information"="{\"ErrCode\":32001,\"ErrClass\":\"dump-unit\",\"ErrScope\":\"internal\",\"ErrLevel\":\"high\",\"Message\":\"mydumper/dumpling runs with error, with output (may empty): \",\"RawCause\":\"invalid connection\"}"]

解决方法:先将pt-kill实用工具停止,然后再重新运行TiDM同步任务,待全量数据dump完成后在开启pt-kill。

(4)迁移时如需对目标表表结构进行修改,如源表有建立分区而目标表通过分片后不需要建立分区、修改表字符集(建议使用utf8mb4)等,可先将表结构从源库导出,修改后导入 DBLE 或导入 DBLE 后在修改表结构,最后再开启 DM 同步任务。

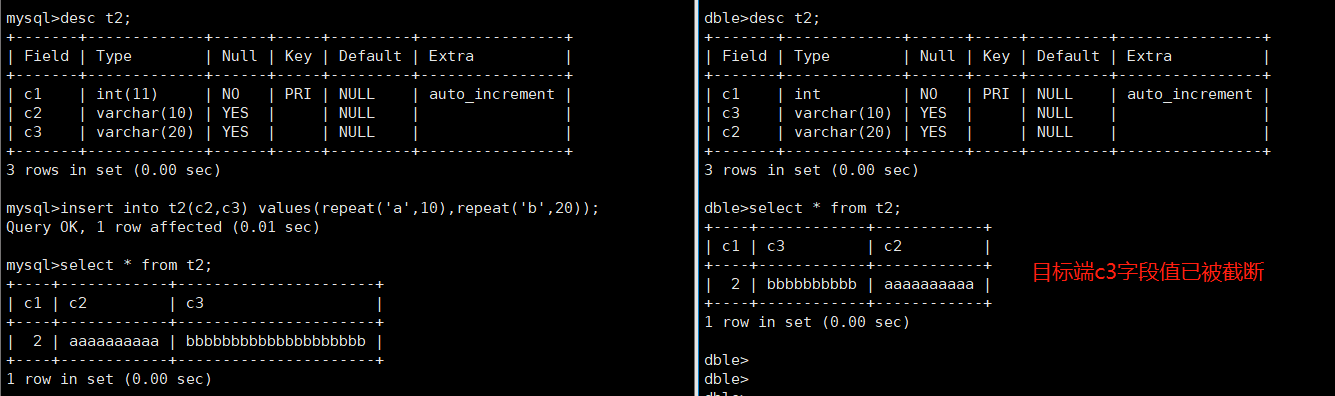

(5)源、目标表字段顺序必须要一致,否则可能会导致数据不一致,两字段同为 varchar 并且长度不相等时,同步任务并不会报错,但写入目标表的值可能会被截断,如下图:

DM 任务启动后连接目标端时,会将 session 级别 sql_mode 设置成下面值: sql_mode=’IGNORE_SPACE,NO_AUTO_VALUE_ON_ZERO,NO_ENGINE_SUBSTITUTION,ALLOW_INVALID_DATES,ONLY_FULL_GROUP_BY’;